In moments of technological frustration, it helps to remember that a computer is basically a rock. That is its fundamental witchcraft, or ours: for all its processing power, the device that runs your life is just a complex arrangement of minerals animated by electricity and language. Smart rocks. The components are mined from the Earth at great cost, and they eventually return to the Earth, however poisoned. This rock-and-metal paradigm has mostly served us well. The miniaturization of metallic components onto wafers of silicon — an empirical trend we call Moore’s Law — has defined the last half-century of life on Earth, giving us wristwatch computers, pocket-sized satellites and enough raw computational power to model the climate, discover unknown molecules, and emulate human learning.

But there are limits to what a rock can do. Computer scientists have been predicting the end of Moore’s Law for decades. The cost of fabricating next-generation chips is growing more prohibitive the closer we draw to the physical limits of miniaturization. And there are only so many rocks left. Demand for the high-purity silica sand used to manufacture silicon chips is so high that we’re facing a global, and irreversible, sand shortage; and the supply chain for commonly used minerals, like tin, tungsten, tantalum, and gold, fuels bloody conflicts all over the world. If we expect 21st-century computers to process the ever-growing amounts of data our culture produces, and to do so sustainably, we will need to reimagine how computers are built. We may even need to reimagine what a computer is to begin with.

Starting from Slime

It’s tempting to believe that computing paradigms are set in stone, so to speak. But there are already alternatives on the horizon. Quantum computing, for one, would shift us from a realm of binary ones and zeroes to one of qubits, making computers drastically faster than we can currently imagine, and the impossible — like unbreakable cryptography — newly possible. Still further off are computer architectures rebuilt around a novel electronic component called a memristor. Speculatively proposed by the physicist Leon Chua in 1971, first proven to exist in 2008, a memristor is a resistor with memory, which makes it capable of retaining data without power. A computer built around memristors could turn off and on like a light switch. It wouldn’t require the conductive layer of silicon necessary for traditional resistors. This would open computing to new substrates — the possibility, even, of integrating computers into atomically thin nano-materials. But these are architectural changes, not material ones.
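To make "a resistor with memory" concrete, here is a minimal Python sketch of an idealized memristor. It is a toy, not a description of any real device: the class name `ToyMemristor`, the parameter values, and the simple rule that the internal state drifts in proportion to the current are all assumptions chosen for illustration.

```python
class ToyMemristor:
    """Idealized memristor: its resistance depends on the charge that has passed through it."""

    def __init__(self, r_on=100.0, r_off=16000.0, k=10000.0, x=0.1):
        self.r_on = r_on    # resistance when fully "on", in ohms (illustrative value)
        self.r_off = r_off  # resistance when fully "off", in ohms (illustrative value)
        self.k = k          # how far the state moves per coulomb of charge (illustrative value)
        self.x = x          # internal state, from 0.0 (off) to 1.0 (on)

    def resistance(self):
        # Blend the two extreme resistances according to the internal state.
        return self.r_on * self.x + self.r_off * (1.0 - self.x)

    def apply(self, voltage, seconds, dt=1e-4):
        # Step through time; the state drifts in proportion to the current.
        for _ in range(int(round(seconds / dt))):
            current = voltage / self.resistance()
            self.x = min(1.0, max(0.0, self.x + self.k * current * dt))


m = ToyMemristor()
print("before any pulse:  ", m.resistance())
m.apply(voltage=1.0, seconds=0.3)   # "write": a positive pulse lowers the resistance
print("after the pulse:   ", m.resistance())
m.apply(voltage=0.0, seconds=1.0)   # power off: no current flows, so the state stays put
print("after power is cut:", m.resistance())
```

With no voltage applied, no current flows and the state cannot move, which is the sense in which such a component would retain data without power.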

For material changes, we must look farther afield, to an organism that occurs naturally only in the most fleeting of places. We need to glimpse into the loamy rot of a felled tree in the woods of the Pacific Northwest, or examine the glistening walls of a damp cave. That’s where we may just find the answer to computing’s intractable rock problem: down there, among the slime molds.

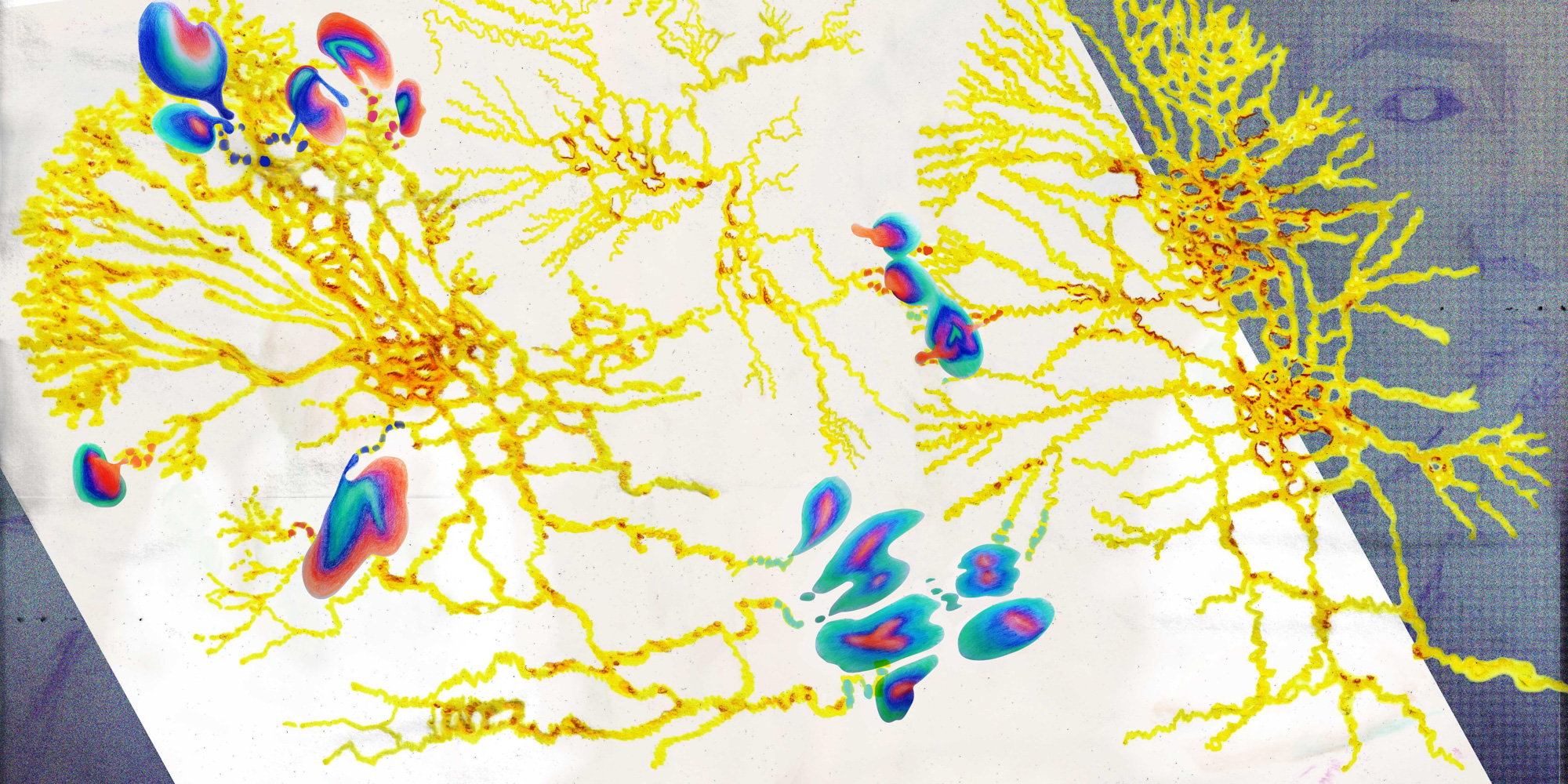

Slime molds are way ahead of our computational speculations. Take memristors: in 2014, a group of researchers at the University of the West of England discovered memristive behaviors in the many-headed Physarum polycephalum, a primitive but compellingly intelligent slime mold. Slime molds aren’t fungi, nor are they animals; at different points in history, they’ve been classified both ways, earning them the Latin name Mycetozoa, or fungal animal. That a slime mold could act as a living memristor, regulating the flow of electricity through a circuit and “remembering” electrical charges, is remarkable, but it’s not unique in the natural kingdom. Scientists have observed these behaviors in the sweat ducts of human skin, in flowing blood, and in leaves. A 2017 study concluded that, most likely, “all living and unmodified plants are ‘memristors,’” proof that Mother Nature anticipates even our cleverest speculations. She may even dictate the next computational frontier, after quantum and memristive computers have come and gone.

Biological systems not only anticipate, but excel at certain thorny computational tasks. In one experiment, researchers released a Physarum polycephalum slime mold on a topographical relief map of the United States. They placed it on the West Coast, in the Oregon coastal town of Newport, and placed a pile of oat flakes (the slime mold’s favorite food) at the other end of the country, in Boston, Massachusetts. The mold shot out protoplasmic tubes, searching for an efficient path towards the oat flakes it sensed via airborne chemicals. After five days, the mold reached Boston, cutting across the country while avoiding mountainous terrain. You may recognize its path if you’ve ever road-tripped from Oregon to New England: the slime mold charted Route 20, the longest road in the US.

Physarum polycephalum is an expert at such tasks. Its sensing, searching protoplasmic tubes can solve mazes, design efficient networks, and easily find the shortest path between points on a map. In a range of experiments, it has modeled the roadways of ancient Rome, traced a perfect copy of Japan’s interconnected rail networks, and smashed the Traveling Salesman Problem, an exponentially complex math problem. It has no central nervous system, but Physarum is capable of limited learning, making it a leading candidate for a new kind of biological computer system — one that isn’t mined, but grown. This proposition has entranced researchers worldwide and attracted investment at the government level. An EU-funded research group, PhyChip, hopes to build a hybrid computer chip from Physarum, by shellacking its protoplasmic tubes in conductive particles. Such a “functional biomorphic computing device” would be sustainable, self-healing and self-correcting. It would also be, by some definition, alive.
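How might a network of tubes "find" a shortest path with no one in charge? The Python sketch below imitates a flow-reinforcement rule of the kind researchers have used to model Physarum: tubes that carry more flow thicken, and idle tubes wither. It is a toy under stated assumptions, not the method of any experiment described here; the function names, the four-node graph, and the step size are all hypothetical.

```python
def solve_pressures(n, edges, cond, source, sink):
    """Find node pressures that push one unit of flow from source to sink."""
    a = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for (i, j, length), d in zip(edges, cond):
        g = d / length  # a thicker, shorter tube conducts more
        a[i][i] += g
        a[j][j] += g
        a[i][j] -= g
        a[j][i] -= g
    b[source] = 1.0
    # Pin the sink's pressure to zero so the system has a unique solution.
    a[sink] = [0.0] * n
    a[sink][sink] = 1.0
    b[sink] = 0.0
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
            b[r] -= factor * b[col]
    # Back substitution.
    p = [0.0] * n
    for r in range(n - 1, -1, -1):
        rest = sum(a[r][c] * p[c] for c in range(r + 1, n))
        p[r] = (b[r] - rest) / a[r][r]
    return p


def grow_network(n, edges, source, sink, steps=100, dt=0.5):
    """Let tube thickness chase the flow each tube carries."""
    cond = [1.0] * len(edges)  # every tube starts equally thick
    for _ in range(steps):
        p = solve_pressures(n, edges, cond, source, sink)
        for k, (i, j, length) in enumerate(edges):
            flux = cond[k] / length * (p[i] - p[j])
            cond[k] += dt * (abs(flux) - cond[k])  # busy tubes grow, idle tubes shrink
    return cond


# Hypothetical map: node 0 is the mold, node 3 is the oat flakes.
# Each edge is (node, node, length).
EDGES = [(0, 1, 1.0), (1, 3, 1.0), (0, 2, 2.0), (2, 3, 2.0), (1, 2, 1.0)]
thickness = grow_network(4, EDGES, source=0, sink=3)
surviving = [(i, j) for (i, j, _), d in zip(EDGES, thickness) if d > 0.5]
print(surviving)
```

On this toy map the shortest route runs 0 to 1 to 3, and those should be the only tubes left thick at the end; the longer detour through node 2 fades away without anything in the code ever comparing path lengths directly.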

This unorthodox hybrid of computer science, physics, mathematics, chemistry, electronic engineering, biology, material science and nanotechnology is called Unconventional Computing. Professor Andrew Adamatzky, the founder of the Unconventional Computing Laboratory at the University of the West of England, explains its ethos: “to uncover and exploit principles and mechanisms of information processing in…physical, chemical and living systems” in order to “develop efficient algorithms, design optimal architectures and manufacture working prototypes of future and emergent computing devices.” In short, Unconventional Computer scientists build computers not from rocks and sand but from the nutrient-seeking protoplasm of slime molds, among other natural materials.

Professor Adamatzky proposes that we will someday be “close partners” with slime mold, harnessing its behavior to grow electronic circuits, solve complex problems, and better understand the mechanics of natural information processing. Over the last decade, his lab has produced nearly 40 prototypes of sensing and computing devices using Physarum polycephalum. He has recently shifted his interests to more widely available fungi, finding that fungal mycelium (the complex, branching filaments that spread below ground before sprouting up the fruiting bodies we know as mushrooms) can solve the same kinds of computational geometry problems as slime mold. The Unconventional Computing Lab recently received funding to develop “computing houses” out of mycelium, “functionalizing” the fungal matter to react to changes in light, temperature and pollution. Adamatzky’s view is expansive. “Everything around us will be a computer and interface results of the computation to us.”

We are left to imagine computer chips bristling with energy and life, laced with the unusual branching filaments of protoplasmic tubes, and monolithic buildings, grown in-situ by computationally-active mycelial networks, adapting, searching, self-repairing, sensing “all what human can sense.” One science fiction story published in this magazine gives us a utopian (and later a dystopian) vision of such a future: “Everything we touched was alive. Each morning I woke up in a bed made of mushroom, covered in sheets of fresh spider silk. The limbs of our home opened with sunrise….Instead of a cell phone, I gazed at a beautiful organic ecosystem with fluorescent proteins arranged to display the news. My teeth stayed clean naturally, a self-balancing ecosystem consuming the excess sugar from my diet. Everything, absolutely everything, was alive.”

A Very Different Kind of UX

Switching from silicon to slime is a transformative idea. For me, the very question feels radically hopeful: might building computers from slime molds and mushrooms transform computing from a sophisticated solipsism into a far more sophisticated expression of our awe-inspiringly complex, interconnected world? Certainly it would change our whole relational experience of computing. It might also be more sustainable, as biological computer systems would consume far less energy than traditional hardware and, at the end of life, be completely biodegradable. “We can shut down our PC completely,” Adamatzky explains, “but we will never shut down a living fungi or a slime mould without killing it.” Forget planned obsolescence.

The research folders on my very rock-based computer are crammed with papers on plant leaf computing; computing driven by the billiard ball-like collisions of droplets and marbles; the problem-solving algorithms of lettuce seedlings; computing systems built around the behavior of blue soldier crabs, rushing between shade and sunshine on a beach. The sheer multiplicity of approaches is enough to make you think that computing is not so much an industry as a way of seeing — an interpretation of the world. “If we are inventive enough, we can interpret any process as a computation,” Adamatzky says. If you’re looking for a computer — even if you’re looking under a rock — a computer is what you’ll find.

The artist and critic James Bridle, in his book New Dark Age, describes “computational thinking” as the unique mental disease of the twentieth century, arguing that massively powerful, seductive calculators reformed our world in their image. In making data-processing machines, we turned our world into data, and “as computation and its products increasingly surround us…so reality itself takes on the appearance of a computer; and our modes of thought follow suit.”

When we train artificial intelligence models on large datasets, for example, we often make the erroneous assumption that our future progresses as some predictable extrapolation of our past, without taking into account the many external factors that determine how humans behave, react, and make choices. In the process, we reproduce and codify historical biases, obliterating any chance we might have of learning from our mistakes. These kinds of errors, Bridle argues, are a consequence of trying to smooth reality’s edges to fit into the inflexible world-model of the computer, reducing all our nuances and contradictions to mere data. Perhaps if our computational systems were built from the Earth up to model the ways nature processes information, we wouldn’t need to jam a square peg into a round hole.

It’s radical, but not impossible. Computing paradigms are hardly set in stone. In the 1950s, electrical analog computing was standard, and today we live in a digital world. Only twenty years ago, quantum computing was science fiction, and today it’s being actively developed by Intel, IBM, Microsoft, and Google, among other tech titans worldwide. A similar process might well unfold with biological systems. “Unconventional computing is a science in flux,” Adamatzky says. “What is unconventional today will be conventional tomorrow.”

Of course, the natural world is more complex than slime molds and lettuce seedlings. These are only the simplest systems that can be studied and manipulated in a laboratory environment. The real world — the living world — is unpredictable, tenacious, and soulful, a humbling entanglement of mutual need. What we call “nature” is a concert of behaviors and processes entirely coeval with the organisms running them, each connected further to an unimaginable multitude of other behaviors and processes, the whole system regenerative, seamless, self-correcting, magnificent.

It’s hard to imagine that we will ever succeed in building a computer system as brilliantly complex as the interrelation of fungal mycelium, far-reaching tree roots, and soil microorganisms in your average healthy forest, what scientists call the “wood wide web.” Smart devices, connected to one another through cloud-based servers vulnerable to cyberattack and plain old entropy, could never do this. And perhaps this is the real reason fully biological computers may remain always beyond our grasp. Even now, as we dream of embedding artificial intelligence into every material surface of our lives, we are at best poorly emulating processes already at play beneath our feet and in our gardens. We’re making a bad copy of the Earth — and, in mining the Earth to create it, we are destroying the original.